# PieAPP

PieAPP: Perceptual Image-Error Assessment through Pairwise Preference.

🌏 Source

The paper is available at https://arxiv.org/abs/1806.02067, and the source code at prashnani/PerceptualImageError.

# Brief introduction

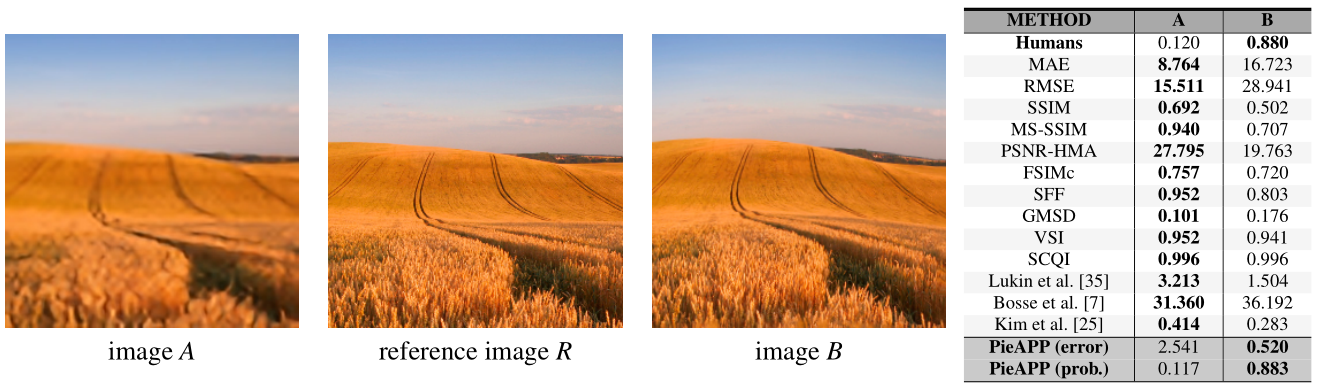

- The paper proposes a new, large-scale dataset labeled with the probability that humans will prefer one image over another.

- The paper then trains a deep-learning model with a novel pairwise-learning framework to predict which of two distorted images humans will prefer.

The resulting metric, PieAPP, correlates well with human opinion and, on the paper's test set, beats the previous state of the art by almost 3× in terms of binary error rate.

# Main contributions

Dataset: The paper does not explicitly convert human preferences into quality scores. Instead, it labels each pair with the percentage of people who preferred one image over the other.
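As a rough illustration of this labeling scheme, the sketch below turns raw vote counts for one image pair into a preference label. The function name `preference_label` and the vote tallies are hypothetical, and the paper's actual data-collection pipeline may involve more than this simple fraction.

```python
def preference_label(votes_for_a: int, votes_for_b: int) -> float:
    """Fraction of raters who preferred image A over image B.

    Hypothetical helper for illustration; not taken from the PieAPP code.
    """
    total = votes_for_a + votes_for_b
    if total == 0:
        raise ValueError("at least one vote is required")
    return votes_for_a / total


# Made-up tallies: 30 of 40 raters preferred image A.
label = preference_label(30, 10)
print(label)  # 0.75
```

The label is kept as a probability for the pair rather than being turned into a per-image quality score, which matches the description above.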

Pairwise-learning framework: The framework trains an error-estimation function using the probability labels in the dataset.

Each distorted image is passed, together with the reference, through one of two identical copies of the error-estimation function:

- Distorted image A + reference image R → error-estimation function #1 → perceptual error score A
- Distorted image B + reference image R → error-estimation function #2 (identical to #1) → perceptual error score B

Then the errors of A and B are combined to predict the probability that one image is preferred over the other.
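The pairwise setup can be sketched in a few lines of Python. This is only a toy under stated assumptions: `toy_error` is a hypothetical stand-in (plain mean squared error) for the learned deep network, the logistic link in `prob_prefer_a` and the squared-error loss in `pairwise_loss` are assumptions about how the two scores are combined and trained, and the pixel values are made up.

```python
import math
from typing import Sequence


def toy_error(distorted: Sequence[float], reference: Sequence[float]) -> float:
    """Hypothetical stand-in for the learned error-estimation function (plain MSE)."""
    return sum((d - r) ** 2 for d, r in zip(distorted, reference)) / len(reference)


def prob_prefer_a(score_a: float, score_b: float) -> float:
    """Assumed logistic link: a lower error for A gives a higher probability that A is preferred."""
    return 1.0 / (1.0 + math.exp(score_a - score_b))


def pairwise_loss(predicted: float, label: float) -> float:
    """Assumed training loss: squared difference to the human preference label."""
    return (predicted - label) ** 2


# Made-up 3-pixel "images".
reference = [0.0, 0.5, 1.0]
image_a = [0.0, 0.5, 0.9]   # mild distortion
image_b = [0.3, 0.2, 0.6]   # stronger distortion

# The same function scores both images, mirroring the two identical branches.
score_a = toy_error(image_a, reference)
score_b = toy_error(image_b, reference)

p_ab = prob_prefer_a(score_a, score_b)
loss = pairwise_loss(p_ab, label=0.75)  # 0.75 is a hypothetical dataset label
```

Because both branches share one function, only the score difference drives the predicted preference, so swapping A and B yields the complementary probability.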

Once PieAPP (described above) is trained using the pairwise probabilities, the learned error-estimation function can be used on its own, on a single distorted image and its reference, to compute that image's perceptual error.
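To illustrate using the error-estimation function by itself after training, the sketch below ranks several distorted versions of one reference by their estimated error. `rank_by_error` and `mean_abs_error` are hypothetical names, and `mean_abs_error` is only a placeholder for the trained network, not the PieAPP model.

```python
from typing import Callable, Dict, List, Sequence

Image = Sequence[float]


def rank_by_error(
    candidates: Dict[str, Image],
    reference: Image,
    error_fn: Callable[[Image, Image], float],
) -> List[str]:
    """Return candidate names sorted from lowest to highest estimated error."""
    return sorted(candidates, key=lambda name: error_fn(candidates[name], reference))


def mean_abs_error(distorted: Image, reference: Image) -> float:
    """Hypothetical placeholder for the trained error-estimation function."""
    return sum(abs(d - r) for d, r in zip(distorted, reference)) / len(reference)


# Made-up 3-pixel "images".
reference = [0.0, 0.5, 1.0]
candidates = {
    "blur": [0.1, 0.5, 0.9],
    "noise": [0.3, 0.2, 0.6],
    "copy": [0.0, 0.5, 1.0],
}

ranking = rank_by_error(candidates, reference, mean_abs_error)
print(ranking)  # ['copy', 'blur', 'noise']
```

No second image or pairwise comparison is needed at this stage: each candidate is scored independently against the reference.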

# Method overview

# Experiments

# Referred in

- papers

| Paper Title | Publication | Source Code |
| --- | --- | --- |
| perceptual-similarity | CVPR 2018 | richzhang/PerceptualSimilarity |
| pieapp | CVPR 2018 | prashnani/PerceptualImageError |